Opening up to your readers – sharing data wealth to make better science

Xuan Yu in Adventures in the Critical Zone

May 23, 2016

Why model and with what data?

It has been said that the pen is mightier than the sword. This adage has held true for centuries as we moved from flickering candlelit homes to luminous rainbows of LED strip lights. As we move further into the second generation of the information age, scientists are confronting technical challenges that will require a rethinking of the “pen”. In the spirit of a more open science, best practices for how scientists handle data and models in research publications need rethinking (Easterbrook, 2014).

A scientist throws a paper airplane with various types of data towards a group of other scientists. Illustration by Mathew New.

Data and models are fundamental to the scientific enterprise. We use the data-model relationship for everything, from planning new research projects, to designing and deploying field observations, to testing our scientific hypotheses. To make sure models are on the right path, scientists constantly design and re-design experiments that produce data to evaluate a model's capability to reproduce the past and to predict the future. However, what if scientists are missing observations essential for making strong predictions? How do we know that scientists are building their models with appropriate data? Currently, model-data strategies are approved or rejected through the peer review process itself. Nevertheless, we might ask: Do current publication practices support the most effective approach to assessing scientific observations and predictions?

Increasingly, in-depth research compels comparison of data from two or more disciplines. It is not uncommon for confederated research teams to tackle the same question from several different approaches. But a diversity of modeling techniques can hinder the accuracy and value of this important work. Effective team science must include building a “thought bridge” to connect disparate data, both for modeling purposes and to be accessible across scientific communities. A key question, then, becomes: how do scientists understand and share Earth system data and models within the framework of community research experiments?

The hydrologic sciences provide a rich example of complex data and model resources. Hydrologic data include water levels and fluxes for snow, streams, groundwater, lakes, vegetation, etc. Critical Zone Observatories have developed and curated many hydrologic datasets to understand the physical, chemical, and biological processes associated with water movement on, in, and above the Earth (Duffy et al., 2014; Brantley et al., 2016).

These datasets have spatial (e.g. watershed, regional, continental) and temporal (e.g. seconds, seasonal, decades) characteristics. For example, mountain snowpack changes seasonally due to air temperature fluctuations. Coastal groundwater levels vary sub-daily and seasonally due to tidal fluctuations and storms. Often, we install sensors to continuously collect hydrologic data across different locations. We also collect water samples from the field and analyze their biological and chemical properties in the lab.

Hydrologic models conceptualize and simplify the physical processes governing the movement of water on, in, and above the Earth. Prediction of runoff is one of the most important functions of many hydrologic models. Assessing the danger of a flood, managing irrigation water sustainably, and controlling pollution in streams all rely on hydrologic modeling results. Hydrologic models usually take meteorological data as input and simulate runoff at the outlet of a watershed. Historic runoff data are also required to calibrate model parameters.
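To make the input, simulation, and calibration steps concrete, here is a minimal sketch in Python. It assumes a single "linear reservoir" in which a fixed fraction `k` of stored water drains as runoff at each time step, and it calibrates `k` by a simple grid search scored with the Nash-Sutcliffe efficiency. The function names, the rainfall values, and the synthetic "observations" are all illustrative assumptions; this is not the model used in the study discussed here, which is far more detailed.

```python
def simulate_runoff(rainfall, k, storage0=0.0):
    """Toy linear reservoir: rain fills a store, a fraction k drains each step."""
    storage = storage0
    runoff = []
    for rain in rainfall:
        storage += rain          # meteorological input
        q = k * storage          # runoff at the watershed outlet
        storage -= q
        runoff.append(q)
    return runoff


def nse(observed, simulated):
    """Nash-Sutcliffe efficiency: 1.0 is a perfect fit, lower is worse."""
    mean_obs = sum(observed) / len(observed)
    residual = sum((o - s) ** 2 for o, s in zip(observed, simulated))
    variance = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - residual / variance


def calibrate(rainfall, observed, candidates):
    """Pick the candidate k whose simulation best matches historic runoff."""
    return max(candidates, key=lambda k: nse(observed, simulate_runoff(rainfall, k)))


# Hypothetical rainfall series (mm per step) and synthetic "historic" runoff
# generated with k = 0.3, standing in for real gauge data.
rainfall = [0.0, 10.0, 5.0, 0.0, 0.0, 0.0]
observed = simulate_runoff(rainfall, 0.3)

candidates = [i / 10 for i in range(1, 10)]
best_k = calibrate(rainfall, observed, candidates)
print("calibrated k:", best_k)
```

Because the observations here were generated by the same toy model, the grid search recovers the parameter exactly; with real data the best score would fall below 1.0, and the choice of candidates and scoring metric would itself be a modeling decision worth documenting.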

Recent years have witnessed the emergence of complex surface-subsurface models that account for the spatial patterns of vegetation, soil properties, and geological formations. While detailed, such complex datasets and model structures introduce significant uncertainties in a model’s ability to make accurate predictions. Even more problematic, such models are difficult to replicate, and simulations from another modeler's published papers are difficult to reuse.

Data and models with a purpose

The goal of researchers is to make models and data available and useful to broad audiences for a wide variety of applications. For example, integrated hydrologic models can be useful for high school teachers who want to show environmental science students watershed issues, for working professionals at a consulting firm, or even for residents living near a lake or river.

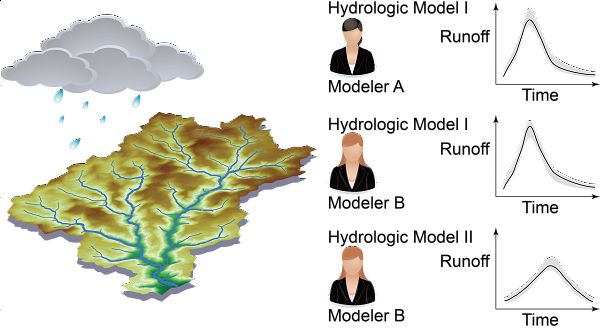

CZOs have built up their cyberinfrastructure for data and model management. Such data management systems have substantially helped researchers share data and knowledge across disciplines. However, at the level of the individual study, many intermediate data and software are still not preserved in a reusable way along with each publication, which may result in misinterpretation and irreproducibility. For example, in a hydrologic modeling study, given the same CZO data, simulation results can vary widely across different groups or individuals, depending on the model that is used. What might be trivial for one modeling group may be very important for another.

Such differences between groups or individuals affect data sharing and research reproducibility. The image above shows examples of reproducibility of hydrologic modeling results. Modeler A simulated the runoff in the river during and after a rainfall event using Hydrologic Model I. A reproducibility exercise happens when another modeler wants to learn the same hydrologic model, or when another modeler wants to compare different models (Easterbrook, 2014). In both cases, data processing pipelines and subjective differences among individual modelers can pose major challenges to scientific reproducibility.

Community research and engagement

In the spirit of addressing a new role for research publications, we (Susquehanna Shale Hills Critical Zone Observatory researchers) have developed a modern publication strategy in a new study with collaborators at INRS (Institut national de la recherche scientifique), Canada. The study found that computational workflows can be effectively preserved directly within each research article. By applying modern publication strategies, a geoscience paper will not only tell the story through traditional publication methods (e.g. text, figures, and tables) but also make digital research outputs (e.g. software, raw data, and final data) persistent, linked, user-friendly, and sustainable. As a result, a potentially large group of readers from scientific communities, governments, and the public will be able to understand the knowledge and reuse the data for their own purposes.
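One small, generic ingredient of making digital outputs persistent and verifiable is a checksum manifest published alongside the paper. The sketch below is an illustration only, not the workflow used in the study: it records a SHA-256 digest for every file under a folder and later reports which files no longer match. The file names `forcing.csv` and `runoff.csv` and the helper names are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map each file's path (relative to root) to its checksum."""
    root = Path(root)
    return {
        path.relative_to(root).as_posix(): sha256_of(path)
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def changed_files(root, manifest):
    """List manifest entries that are missing or whose contents differ."""
    current = build_manifest(root)
    return sorted(name for name, digest in manifest.items()
                  if current.get(name) != digest)


# Demo with hypothetical input and output files in a temporary folder.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "forcing.csv").write_text("day,rain_mm\n1,10.0\n")
    (root / "runoff.csv").write_text("day,runoff_mm\n1,3.0\n")

    manifest = build_manifest(root)            # publish this with the paper
    unchanged = changed_files(root, manifest)  # nothing modified yet

    (root / "runoff.csv").write_text("day,runoff_mm\n1,3.1\n")
    changed = changed_files(root, manifest)    # the edited file is flagged

print(unchanged, changed)
```

A reader who downloads the archived files can rebuild the manifest and confirm they are working with exactly the data and outputs the authors used.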

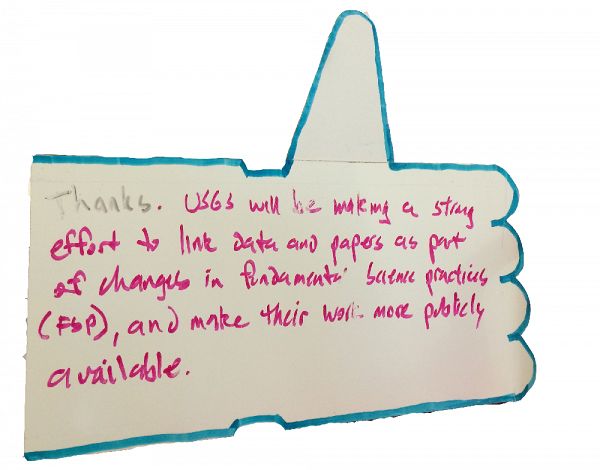

To bring modern publication strategies to broader communities, a lecture series enabled by the NSF EarthCube Distinguished Lecturer Program was initiated in Spring 2016. These lectures explain one project, Ontosoft, that is being developed as part of NSF’s EarthCube Initiative, which has a long-term goal of opening data and connecting scientific research. Lectures from this series have been presented at the University of Delaware, the University of Pennsylvania, USGS, the University of Maryland, and Michigan State University. Feedback during the lectures showed that most scientists were convinced of the importance of data preservation, though specific difficulties with data management varied from case to case. As conversations such as the EarthCube Distinguished Lectures continue, new ideas will be developed to shape a fair and open research culture.

An anonymous comment after the EarthCube Distinguished Lecture at USGS on World Water Day 2016. The lecture tour was supported by the NSF EarthCube Distinguished Lectures Program. More details of the lectures are collected under the #whyearthcubedistinguishedlecture hashtag on Twitter.

If you have any questions about water modelling, please contact Dr. Xuan Yu directly.

If you have any topics you would like explored, send them our way at Askcriticalzone@gmail.com.

References

Brantley, S. L., DiBiase, R. A., Russo, T. A., Shi, Y., Lin, H., Davis, K. J., Kaye, M., Hill, L., Kaye, J., Eissenstat, D. M., Hoagland, B., Dere, A. L., Neal, A. L., Brubaker, K. M., and Arthur, D. K. (2016), Designing a suite of measurements to understand the critical zone, Earth Surface Dynamics, 4, 211-235, doi:10.5194/esurf-4-211-2016.

Duffy, C., Y. Shi, K. Davis, R. Slingerland, L. Li, P. L. Sullivan, Y. Goddéris, S. Brantley (2014), Designing a Suite of Models to Explore Critical Zone Function, Procedia Earth and Planetary Science, 10, 7-15.

Easterbrook, S. M. (2014), Open code for open science?, Nat. Geosci., 7(11), 779–781, doi:10.1038/ngeo2283.

http://video.esri.com/watch/994/geodesign-as-a-cyberlearning-game-interactive-hydrologic-modeling-in-the-classroom

McNutt, M., K. Lehnert, B. Hanson, B. A. Nosek, A. M. Ellison, J. L. King (2016), Liberating field science samples and data, Science, 351(6277), 1024-1026.

National Science Foundation (2015), Public Access Plan: Today’s data, tomorrow’s discoveries. http://www.nsf.gov/pubs/2015/nsf15052/nsf15052.pdf

Yu, X., C. J. Duffy, A. N. Rousseau, G. Bhatt, Á. Pardo Álvarez, and D. Charron (2016), Open science in practice: Learning integrated modeling of coupled surface-subsurface flow processes from scratch, Earth and Space Science, DOI: 10.1002/2015EA000155

Author, Xuan Yu, maintaining a data logger at a tidal drain. Photo credit: Joshua J. LeMonte.

Different types of reproducibility of hydrologic modeling results.

Guest Author

Xuan Yu

CZO grad student. Specialty: wetland hydrology, hyporheic hydrology, distributed watershed modeling for ecosystem services

ABOUT THIS BLOG

Justin Richardson and his guests answer questions about the Critical Zone, synthesize CZ research, and meet folks working at the CZ observatories

General Disclaimer: Any opinions, findings, conclusions or recommendations presented in the above blog post are only those of the blog author and do not necessarily reflect the views of the U.S. CZO National Program or the National Science Foundation. For official information about NSF, visit www.nsf.gov.
